Land Cover Mapping - Part 3 (Validation)

Published on Jun 04, 2020 | Bikesh Bade

Model validation is the process of evaluating a trained model on a test data set that was not used for training. This gives an estimate of how well the trained model generalizes. The effectiveness of a model can be measured with different validation methods, among which the confusion matrix is one of the most commonly used. A confusion matrix is a performance measurement for machine learning classification. It is extremely useful for deriving recall, precision, specificity, and accuracy.
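
As a rough illustration of the four measures named above, here is a minimal sketch in plain JavaScript (not the Earth Engine API) for a two-class case. The counts and the `binaryMetrics` helper are hypothetical and exist only for this example.

```javascript
// Hypothetical counts for a two-class test set (e.g. "forest" vs "not forest").
// tp = true positives, fp = false positives, fn = false negatives, tn = true negatives.
var counts = { tp: 40, fp: 10, fn: 5, tn: 45 };

// Derive the standard measures from the four cells of a 2x2 confusion matrix.
function binaryMetrics(c) {
  var total = c.tp + c.fp + c.fn + c.tn;
  return {
    accuracy: (c.tp + c.tn) / total,      // share of all predictions that are correct
    precision: c.tp / (c.tp + c.fp),      // share of predicted positives that are real
    recall: c.tp / (c.tp + c.fn),         // share of real positives that were found
    specificity: c.tn / (c.tn + c.fp)     // share of real negatives that were found
  };
}

var metrics = binaryMetrics(counts);
console.log(metrics);
```

With more than two classes, the same ideas are applied per class, which is what the per-class accuracies later in this post report.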

How to Calculate the Confusion Matrix?

A confusion matrix is a summary of prediction results on a classification problem. The numbers of correct and incorrect predictions are counted and broken down by class. This is the key to the confusion matrix. It shows the ways in which your classification model is confused when it makes predictions, giving insight not only into how many errors a classifier makes but, more importantly, into which types of errors it makes.

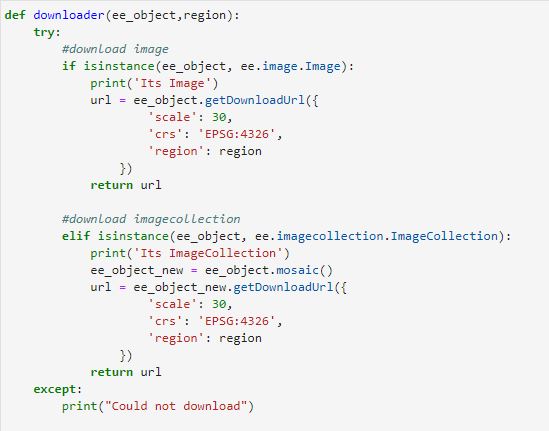
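
To make the counting concrete, the following is a minimal sketch in plain JavaScript (not the Earth Engine API) that tallies a confusion matrix from two lists of class IDs. The label arrays and the `confusionMatrix` helper are hypothetical, and the layout (rows for the reference class, columns for the predicted class) is an assumption of this sketch.

```javascript
// Hypothetical reference (actual) and predicted class IDs for eight validation points.
var actual    = [0, 0, 0, 1, 1, 2, 2, 2];
var predicted = [0, 0, 1, 1, 1, 2, 0, 2];

// Count how often each (actual, predicted) pair occurs.
// Rows index the actual class, columns index the predicted class.
function confusionMatrix(actualLabels, predictedLabels, numClasses) {
  var matrix = [];
  for (var i = 0; i < numClasses; i++) {
    matrix.push(new Array(numClasses).fill(0));
  }
  for (var k = 0; k < actualLabels.length; k++) {
    matrix[actualLabels[k]][predictedLabels[k]] += 1;
  }
  return matrix;
}

var matrix = confusionMatrix(actual, predicted, 3);
console.log(matrix);
```

The diagonal cells hold the correctly classified points. Every off-diagonal cell records one specific kind of confusion, for example a class 0 point that was predicted as class 1.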

Google Earth Engine has a built-in function for calculating the confusion matrix. But first, you have to create a validation set for the classification model you have developed.

    // randomColumn() adds a column of uniform random numbers, named 'random' by default.
    // `sample`, `classifier`, and `label` are assumed to be defined in the earlier parts of this series.
    // Roughly 70% training, 30% testing.
    var split = 0.7;
    var validation = sample.filter(ee.Filter.gte('random', split));

    // Classify the validation data.
    var validated = validation.classify(classifier);

    // Get a confusion matrix representing expected accuracy.
    var testAccuracy = validated.errorMatrix(label, 'classification');
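
The idea behind the split can be sketched in plain JavaScript (this is a stand-in, not Earth Engine code). The `points` array is hypothetical, and `Math.random()` plays the role of the `random` column. Points below the threshold go to training and the rest go to validation, so the two sets never overlap.

```javascript
// Hypothetical stand-in for the labelled sample: 1000 points,
// each with one uniform random value in [0, 1), like a 'random' column.
var points = [];
for (var i = 0; i < 1000; i++) {
  points.push({ id: i, random: Math.random() });
}

var split = 0.7;

// Values below the threshold: roughly 70% of the points, used for training.
var trainingPoints = points.filter(function (p) { return p.random < split; });

// Values at or above the threshold: roughly 30%, held back for validation.
var validationPoints = points.filter(function (p) { return p.random >= split; });

console.log(trainingPoints.length, validationPoints.length);
```

Because the values are random, the split is only approximately 70/30, which is why the comment in the Earth Engine code says "roughly".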

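The final step is to generate the following:

- Overall Accuracy - the proportion of all reference sites that were classified correctly.
- Producer's Accuracy - accuracy from the point of view of the map maker: of the reference sites that belong to a class, the proportion that were classified as that class.
- Consumer's Accuracy - accuracy from the point of view of the map user (also called user's accuracy): of the sites classified as a class, the proportion that actually belong to that class.
- Kappa Coefficient - a statistic that measures how much better the classification agrees with the reference data than would be expected by chance.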

    // Overall accuracy
    var OA = testAccuracy.accuracy();
    // Producer's accuracy
    var PA = testAccuracy.producersAccuracy();
    // Consumer's accuracy
    var CA = testAccuracy.consumersAccuracy();
    // Kappa coefficient
    var Kappa = testAccuracy.kappa();
    // Order of classes
    var Order = testAccuracy.order();

    print(testAccuracy, 'Confusion Matrix');
    print(OA, 'Overall Accuracy');
    print(PA, 'Producers Accuracy');
    print(CA, 'Consumers Accuracy');
    print(Kappa, 'Kappa');
    print(Order, 'Order');
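
To see what these four statistics mean numerically, here is a minimal sketch in plain JavaScript (not the Earth Engine API) that derives them from a small matrix using the standard textbook formulas. The matrix values and the `accuracyReport` helper are hypothetical, and the layout (rows for the reference class, columns for the predicted class) is an assumption of this sketch.

```javascript
// Hypothetical 3-class confusion matrix.
// Rows = reference (actual) class, columns = predicted class.
var cm = [
  [50,  3,  2],
  [ 5, 40,  5],
  [ 2,  3, 40]
];

function sum(values) {
  return values.reduce(function (a, b) { return a + b; }, 0);
}

function accuracyReport(m) {
  var rowTotals = m.map(function (row) { return sum(row); });
  var colTotals = m[0].map(function (_, j) {
    return sum(m.map(function (row) { return row[j]; }));
  });
  var total = sum(rowTotals);
  var correct = sum(m.map(function (row, i) { return row[i]; }));
  var overall = correct / total;

  // Agreement expected by chance, from the row and column totals.
  var expected = sum(rowTotals.map(function (r, i) {
    return r * colTotals[i];
  })) / (total * total);

  return {
    overall: overall,
    // Per class: correct / all reference sites of that class.
    producers: m.map(function (row, i) { return row[i] / rowTotals[i]; }),
    // Per class: correct / all sites predicted as that class.
    consumers: m.map(function (row, i) { return row[i] / colTotals[i]; }),
    kappa: (overall - expected) / (1 - expected)
  };
}

var report = accuracyReport(cm);
console.log(report);
```

A kappa close to 1 means the classification agrees with the reference data far more than chance would explain, while a value near 0 means the agreement is about what random assignment would give.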

Continue to Analysis

Responses

Arif

Hi! Thank you for the code. I have tried and I got "GetData" is not defined in this scope

Jun 05, 2020